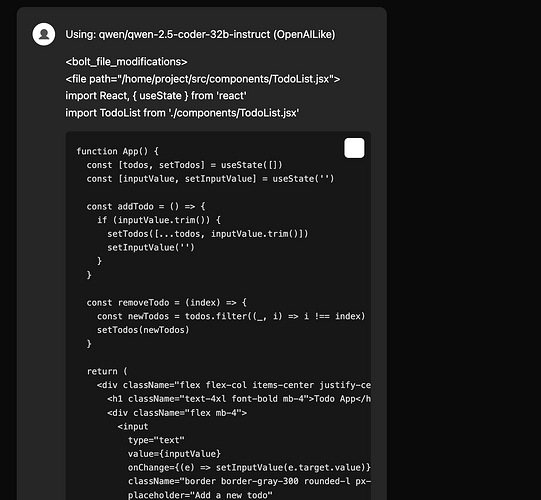

When a user edits the code and then sends a message, the diffs are prepended to the user message. It would be better to have a parser that can either decorate that block in the UI or hide it from the UI entirely.
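A rough sketch of what such a parser could look like, in case it helps the discussion. The wrapper tag name `bolt_file_modifications` and the `splitModifications` helper are assumptions for illustration; the real wrapper used in the codebase may differ.

```typescript
// Hypothetical tag name; adjust to whatever the app actually prepends.
const MODIFICATIONS_TAG = 'bolt_file_modifications';

interface ParsedUserMessage {
  diffs: string | null; // raw contents of the prepended block, or null if absent
  text: string; // what the user actually typed
}

// Splits a leading modifications block off the user message so the UI can
// decorate it (e.g. a collapsible "files changed" chip) or hide it.
function splitModifications(raw: string): ParsedUserMessage {
  const pattern = new RegExp(
    `^\\s*<${MODIFICATIONS_TAG}>([\\s\\S]*?)</${MODIFICATIONS_TAG}>\\s*`,
  );
  const match = raw.match(pattern);

  if (!match) {
    return { diffs: null, text: raw };
  }

  return {
    diffs: (match[1] ?? '').trim(),
    text: raw.slice(match[0].length),
  };
}
```

The full message still goes to the LLM untouched; only the rendering layer would call this and decide what to do with `diffs`.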

I don’t have it handy right now, I’ll post it tomorrow AM, but I spent a good amount of time last night on the uploadZip equivalent of downloadZip. It uses the existing JSZip functionality to map the zip’s entire text/binary contents to a true FileMap, which is the set of file additions that I think the editor workbench needs to function.

I’m curious about your thoughts on one thing specifically: once the filepath keys & content are in place, which workbench method(s) would each file have to go through in order to help maintain the chat history? Selfishly, I feel that uploading a large example project (with the option to ignore node_modules, various .folders, etc.) should have zero impact on LLM token usage, while the project is still available as context in the user/assistant chat.
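To make the "filepath keys & content" part concrete, here is a minimal sketch of the filtering and mapping step. It deliberately leaves JSZip out and starts from already-extracted entries. The `ZipEntry` shape, the `FileMap`/`Dirent` types, and the `/home/project` root are assumptions for illustration and may not match the real workbench types.

```typescript
// Assumed shapes, modeled loosely on a path-keyed file store.
type Dirent =
  | { type: 'file'; content: string; isBinary: boolean }
  | { type: 'folder' };
type FileMap = Record<string, Dirent>;

// Hypothetical intermediate form of one extracted zip entry.
interface ZipEntry {
  path: string; // relative path inside the zip, '/'-separated
  isDir: boolean;
  text: string; // decoded text; ignored for binary entries
  isBinary: boolean;
}

// Skip node_modules and any dot-folder (.git, .vscode, ...).
// Dot-files such as .gitignore are kept.
function isIgnored(segments: string[], isDir: boolean): boolean {
  const dirSegments = isDir ? segments : segments.slice(0, -1);
  return dirSegments.some((seg) => seg === 'node_modules' || seg.startsWith('.'));
}

function buildFileMap(entries: ZipEntry[], root = '/home/project'): FileMap {
  const map: FileMap = {};

  for (const entry of entries) {
    const segments = entry.path.split('/').filter((s) => s.length > 0);

    if (segments.length === 0 || isIgnored(segments, entry.isDir)) {
      continue;
    }

    // Register every parent folder so the tree is complete.
    const dirCount = entry.isDir ? segments.length : segments.length - 1;
    for (let i = 1; i <= dirCount; i++) {
      map[`${root}/${segments.slice(0, i).join('/')}`] = { type: 'folder' };
    }

    if (!entry.isDir) {
      map[`${root}/${segments.join('/')}`] = {
        type: 'file',
        content: entry.isBinary ? '' : entry.text,
        isBinary: entry.isBinary,
      };
    }
  }

  return map;
}
```

Filtering before anything reaches the workbench keeps ignored folders out of both the editor and any later context building.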

Thoughts?

Obviously also looking for input from @wonderwhy.er. I don’t want to create an additional discussion about project import & sync as a whole, but with all of the project zip content ready to go, I’m just very curious which one or many workbench.ts calls would be made to update the internal context with a full starter project.

Writing a new file would require calling the webcontainer write function directly. That’s what the action runner is doing.

As for context, it’s tricky: the context needs to be a message in the chat, which causes an issue if that message is displayed to the user. In one of my PRs I added an ‘annotations’ value of ‘toolResponse’ to the message, where I was using that message for LLM context but didn’t want to show it to the user.

And in the UI I was just not showing any message that has ‘toolResponse’ annotations.
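For anyone following along, the filtering described above could be as small as the sketch below. The `ChatMessage` shape, with `annotations` as a plain string array, is an assumption for illustration; the actual message type in the PR may be structured differently.

```typescript
// Assumed message shape; only the fields needed for this sketch.
interface ChatMessage {
  id: string;
  role: 'user' | 'assistant' | 'system';
  content: string;
  annotations?: string[];
}

const HIDDEN_ANNOTATION = 'toolResponse';

// Messages tagged with the hidden annotation stay in the history sent to
// the LLM but are dropped from the list the chat panel renders.
function visibleMessages(messages: ChatMessage[]): ChatMessage[] {
  return messages.filter(
    (message) => !(message.annotations ?? []).includes(HIDDEN_ANNOTATION),
  );
}
```

The full `messages` array is still what gets sent to the model; only the rendered list goes through `visibleMessages`.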

I think this discussion deserves a little group call, it seems like we’re all on the same page and it’s just a matter of proper logistics to get the feature in.

About the token usage: I don’t think we can avoid that if we want the LLM to know all the content of the imported project.

I have tested providing only the file paths in the context.

It doesn’t work well. This needs the separate context management feature I was talking about in another chat, where we upload the project but provide only the file paths in the context.

And there will be a context buffer, where the LLM can choose which files it wants to load before writing any code. This buffer has a fixed size, which the LLM has to manage intelligently based on its current task.
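A minimal sketch of how such a buffer might behave, just to pin down the idea. Everything here is hypothetical: the `ContextBuffer` class, its method names, and the use of character count as a stand-in for tokens are assumptions, not an existing feature.

```typescript
// Fixed-size buffer of file contents the LLM has asked to load.
// Size is measured in characters as a rough proxy for tokens.
class ContextBuffer {
  private readonly maxChars: number;
  private readonly loaded = new Map<string, string>();

  constructor(maxChars: number) {
    this.maxChars = maxChars;
  }

  get used(): number {
    let total = 0;
    this.loaded.forEach((content) => {
      total += content.length;
    });
    return total;
  }

  // Returns false when the file does not fit; the LLM must unload first.
  load(path: string, content: string): boolean {
    const current = this.loaded.get(path)?.length ?? 0;
    if (this.used - current + content.length > this.maxChars) {
      return false;
    }
    this.loaded.set(path, content);
    return true;
  }

  unload(path: string): void {
    this.loaded.delete(path);
  }

  // Context text: every path is listed, but only loaded files have content.
  render(allPaths: string[]): string {
    const listing = allPaths.map((p) => `- ${p}`).join('\n');
    const bodies: string[] = [];
    this.loaded.forEach((content, path) => {
      bodies.push(`--- ${path} ---\n${content}`);
    });
    return [`Project files:\n${listing}`, ...bodies].join('\n\n');
  }
}
```

The model would see the full path listing every turn and issue load/unload requests, so the cost per message is bounded by `maxChars` instead of by the project size.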

I love the idea of a context buffer. Thanks again for all of your thoughts about these priority features.

Also my bad @thecodacus, I just processed your comment about decoration and presentation in the chat panel. We def need to clean up the bolt modification message, but I also want to standardize the appearance of messages/actions in chat for sure. I bet that is annoying you as well, haha