There was an error processing your request: Custom error: prompt is too long: 212293 tokens > 204648 maximum

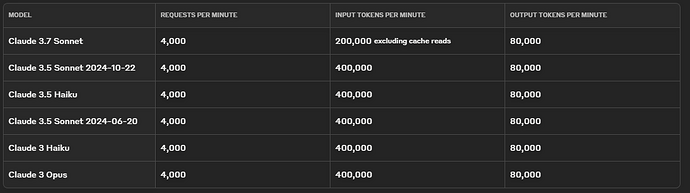

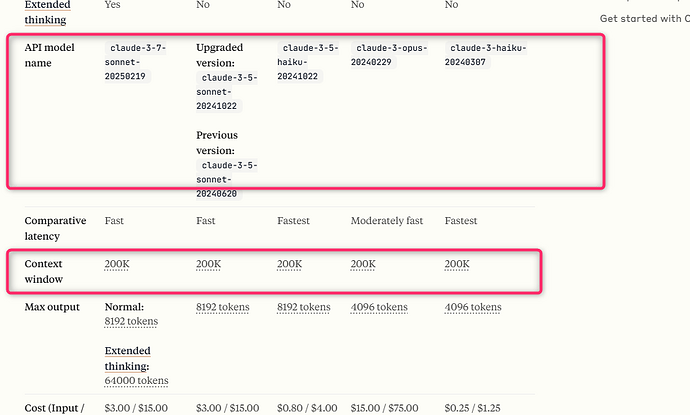

You can't change the maximum context size for cloud models.

Make sure you have context optimization on and you are on the latest release of bolt.diy, which should reduce the token usage.

If you still get the error because your project is very large, you need to switch to a model that supports a larger context window. For example, Google has models that support up to 2M tokens of context.
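To get a rough idea of whether a prompt will fit before sending it, something like the sketch below may help. It is not part of bolt.diy: the helper names and the model entries are hypothetical, the 4-characters-per-token ratio is only a common rule of thumb (real tokenizers differ), and the two limits are taken from the error message and the 2M figure mentioned above.

```typescript
// Hypothetical shape for illustration; not a bolt.diy type.
interface ModelLimit {
  name: string;
  maxContextTokens: number;
}

// Rough heuristic: about 4 characters per token. Real tokenizers will differ.
function estimateTokens(text: string): number {
  return Math.ceil(text.length / 4);
}

// True when the prompt is within the model's context window.
function fitsInContext(promptTokens: number, model: ModelLimit): boolean {
  return promptTokens <= model.maxContextTokens;
}

// Names of the models whose context window can hold the prompt.
function modelsThatFit(promptTokens: number, models: ModelLimit[]): string[] {
  return models
    .filter((m) => fitsInContext(promptTokens, m))
    .map((m) => m.name);
}

// Placeholder model names; only the limits come from the thread.
const candidates: ModelLimit[] = [
  { name: "current-model", maxContextTokens: 204648 }, // limit reported in the error
  { name: "large-context-model", maxContextTokens: 2000000 }, // stands in for a 2M-context model
];

// 212293 is the prompt size reported in the error message.
console.log(modelsThatFit(212293, candidates));
```

With the numbers from the error, only the larger-context entry passes the check, which matches the advice above: reduce the prompt size first, and switch models only if that is not enough.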

ohhh yeah you are right